The Cohere Datasets API (and How to Use It)

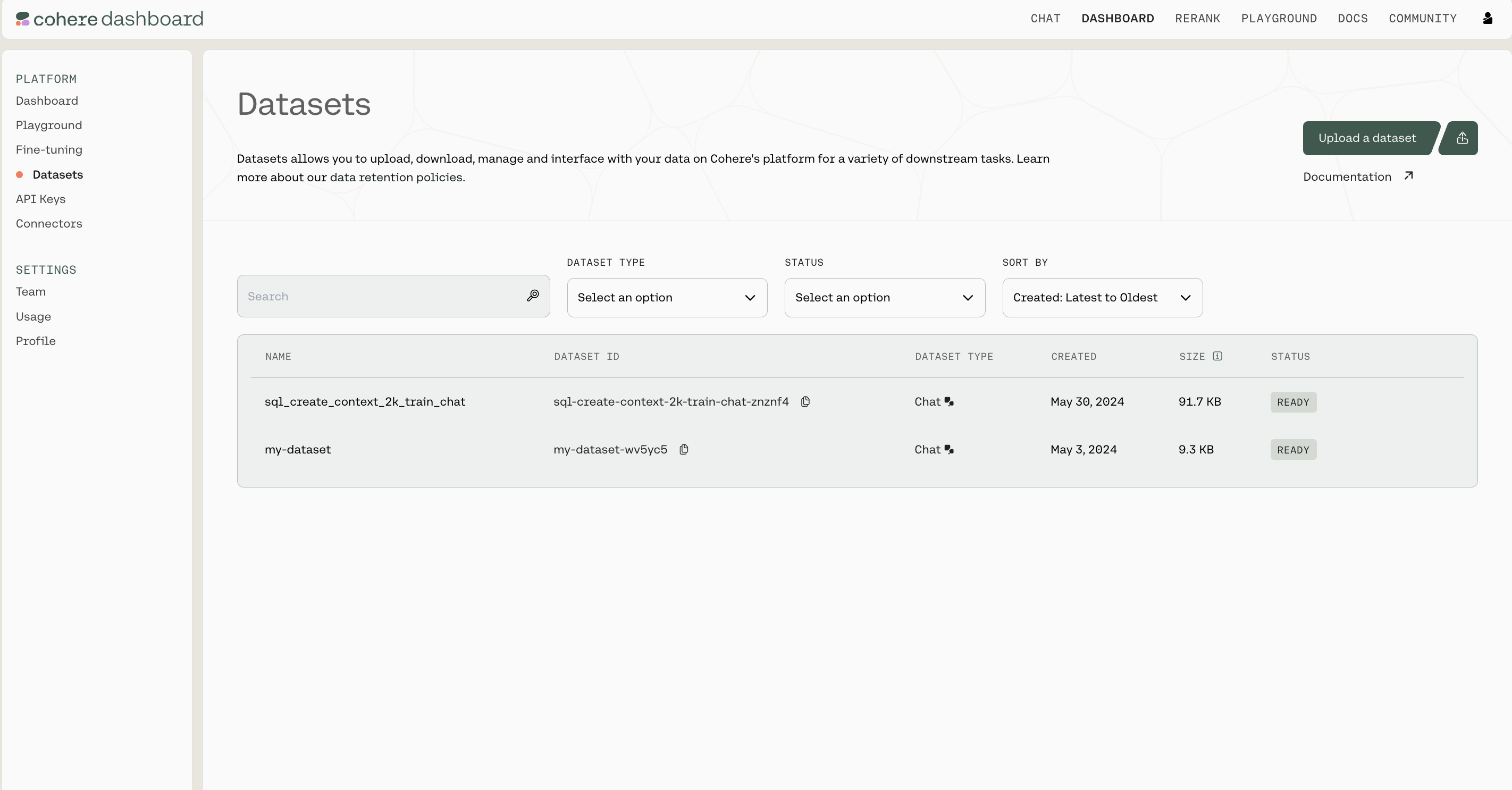

The Cohere platform allows you to upload and manage datasets that can be used in batch embedding with Embedding Jobs. Datasets can be managed in the Dashboard or programmatically using the Datasets API.

There are certain limits to the files you can upload, specifically:

You should also be aware of how Cohere handles data retention. This is the most important context:

First, let’s install the SDK (for Python, `pip install cohere`).

Import dependencies and set up the Cohere client.
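A minimal setup sketch, assuming the Python SDK is installed as the `cohere` package and the API key is stored in an environment variable. The variable name `CO_API_KEY` and the helper names `load_api_key` and `make_client` are choices made for this illustration, not part of the original page.

```python
import os


def load_api_key(env_var: str = "CO_API_KEY") -> str:
    """Read the API key from the environment instead of hard-coding it."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable first.")
    return key


def make_client():
    """Build a Cohere client; the SDK import is deferred until it is needed."""
    import cohere  # installed with: pip install cohere

    return cohere.Client(api_key=load_api_key())
```

Calling `co = make_client()` returns the client object on which the dataset operations described on this page are invoked.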

(The rest of the examples on this page are in Python, but you can find more detailed setup instructions in the GitHub repositories for Python, TypeScript, and Go.)

Datasets are created by uploading files, specifying both a name for the dataset and the dataset type.

The file extension and file contents have to match the requirements for the selected dataset type. See the table below to learn more about the supported dataset types.

The dataset name is useful when browsing the datasets you’ve uploaded. In addition to its name, each dataset will also be assigned a unique id when it’s created.

Here is an example code snippet illustrating the process of creating a dataset, with both the name and the dataset type specified.
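The following is a sketch rather than a definitive implementation. It assumes `co` is a client from the Cohere Python SDK, that the upload call is `co.datasets.create`, and that `"embed-input"` is the dataset type for Embedding Jobs. The helper name `create_embed_dataset` is hypothetical.

```python
from pathlib import Path


def create_embed_dataset(co, name: str, path: str) -> str:
    """Upload a file as a dataset and return the id assigned to it.

    `co` is expected to be a Cohere client; `co.datasets.create` and the
    "embed-input" type are assumptions about the Python SDK.
    """
    file_path = Path(path)
    if not file_path.is_file():
        raise FileNotFoundError(f"No such file: {file_path}")

    # Open in binary mode so the raw bytes are uploaded unchanged.
    with file_path.open("rb") as f:
        response = co.datasets.create(
            name=name,        # human-readable label, may be duplicated
            data=f,           # the file to upload
            type="embed-input",
        )
    # The unique id is what later calls use to refer to this dataset.
    return response.id
```

Taking the client as a parameter keeps the helper easy to exercise with a stand-in object before pointing it at a real account.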

Whenever a dataset is created, the data is validated asynchronously against the rules for the specified dataset type. This validation is kicked off automatically on the backend, and must be completed before a dataset can be used with other endpoints.

Here’s a code snippet showing how to check the validation status of a dataset you’ve created.
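One way to do this is to poll the dataset until validation finishes. The sketch below assumes the Python SDK exposes `co.datasets.get(id=...)` and that the response carries `dataset.validation_status`; the status strings treated as "still pending" and the helper name `wait_for_validation` are likewise assumptions.

```python
import time


def wait_for_validation(co, dataset_id: str, poll_seconds: float = 5.0,
                        timeout_seconds: float = 600.0) -> str:
    """Poll a dataset until validation finishes and return the final status."""
    # Assumed set of in-progress statuses; adjust if the API reports others.
    pending = {"unknown", "queued", "processing"}
    deadline = time.monotonic() + timeout_seconds

    while True:
        status = co.datasets.get(id=dataset_id).dataset.validation_status
        if status not in pending:
            return status  # a terminal status such as "validated" or "failed"
        if time.monotonic() >= deadline:
            raise TimeoutError(f"Dataset {dataset_id} is still {status!r}")
        time.sleep(poll_seconds)
```

Because validation is asynchronous, a timeout guards against waiting forever if the dataset never leaves a pending state.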

To help you interpret the results, here’s a table specifying all the possible API error messages and what they mean:

The Datasets API will preserve metadata if it is specified at the time of upload. During the create dataset step, you can specify keep_fields or optional_fields, each of which is a list of strings naming the metadata fields you’d like to preserve. keep_fields is the more restrictive of the two: if a listed field is missing from an entry, the dataset will fail validation. optional_fields is lenient: empty fields are skipped and validation still passes.

As seen in the above example, the following would be a valid create_dataset call: langs is in the first entry but not in the second, so it belongs in optional_fields, while the fields wiki_id, url, views and title are present in both JSON entries and can be listed in keep_fields.
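The difference between the two options can be illustrated locally, without calling the API. The sketch below mimics the rule described above; the function `select_metadata` and the sample records (including their values) are hypothetical and only stand in for the kind of entries the text refers to.

```python
def select_metadata(records, keep_fields, optional_fields=()):
    """Mimic locally how keep_fields and optional_fields treat each record."""
    selected = []
    for index, record in enumerate(records):
        missing = [f for f in keep_fields if f not in record]
        if missing:
            # keep_fields is strict: one missing field fails the whole dataset.
            raise ValueError(f"record {index} is missing {missing}")
        kept = {f: record[f] for f in keep_fields}
        # optional_fields is lenient: absent fields are simply skipped.
        kept.update({f: record[f] for f in optional_fields if f in record})
        selected.append(kept)
    return selected


# Hypothetical records: "langs" appears in the first entry only.
records = [
    {"wiki_id": 1, "url": "https://example.com/a", "views": 10,
     "title": "A", "text": "first passage", "langs": 3},
    {"wiki_id": 2, "url": "https://example.com/b", "views": 20,
     "title": "B", "text": "second passage"},
]

preserved = select_metadata(
    records,
    keep_fields=["wiki_id", "url", "views", "title"],
    optional_fields=["langs"],
)
```

Moving `langs` into `keep_fields` would raise `ValueError` here, which mirrors the failed validation described in the text. The same two lists are what would be passed as `keep_fields` and `optional_fields` when creating the dataset.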

When a dataset is created, the type field must be specified in order to indicate the type of tasks this dataset is meant for.

The following table describes the types of datasets supported by the Datasets API:

A dataset can be fetched using its unique id. Note that the dataset name and id are different from each other; names can be duplicated, while ids cannot.

Here is an example code snippet showing how to fetch a dataset by its unique id.
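A minimal sketch, assuming the Python SDK call is `co.datasets.get(id=...)` and that the response wraps the dataset in a `.dataset` attribute with `name`, `id`, and `validation_status` fields. The helper names `fetch_dataset` and `describe` are hypothetical.

```python
def fetch_dataset(co, dataset_id: str):
    """Fetch one dataset by its unique id and return the dataset object."""
    # Assumed SDK call and response shape: co.datasets.get(id=...).dataset
    response = co.datasets.get(id=dataset_id)
    return response.dataset


def describe(dataset) -> str:
    """One-line summary; names may repeat across datasets, ids do not."""
    return f"{dataset.name} (id={dataset.id}, status={dataset.validation_status})"
```

Looking datasets up by id rather than by name avoids ambiguity when several uploads share the same name.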

Datasets are automatically deleted after 30 days, but they can also be deleted manually. Here’s a code snippet showing how to do that:
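A minimal sketch, assuming the Python SDK call is `co.datasets.delete(id=...)`. The helper name `delete_dataset` and its empty-id guard are additions made for this illustration.

```python
def delete_dataset(co, dataset_id: str) -> None:
    """Delete a dataset by id instead of waiting for automatic expiry."""
    if not dataset_id:
        # Guard against accidentally passing an empty or unset id.
        raise ValueError("dataset_id must be a non-empty string")
    # Assumed SDK call: co.datasets.delete(id=...)
    co.datasets.delete(id=dataset_id)
```

Since deletion is keyed on the unique id, datasets that share a name are unaffected by removing one of them.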